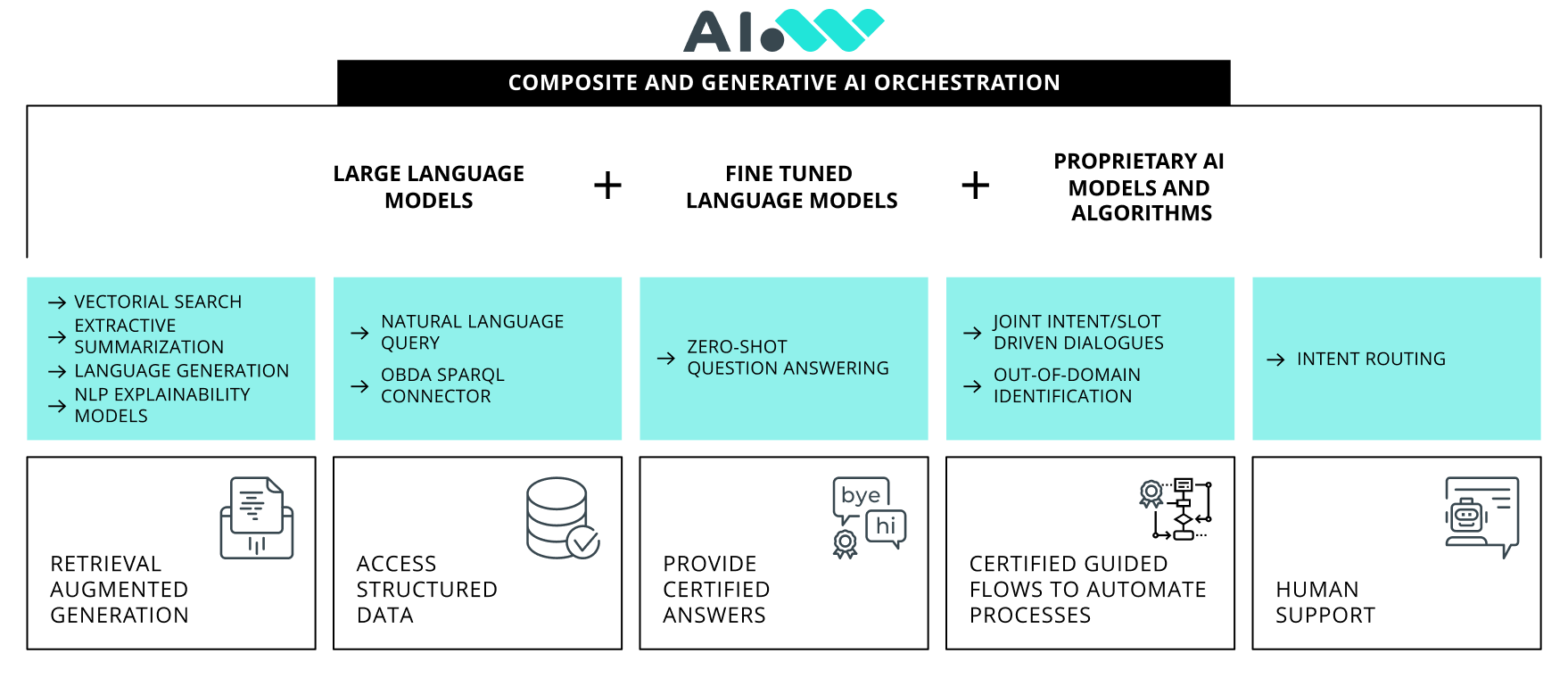

AI Orchestrator

Overcome Generative AI Limitations

LLMs are capable of incredible things, but properly guiding their behavior is essential to achieving consistently suitable outcomes and is therefore a key factor in the successful integration of AI within business processes.

The AI Orchestrator works as a guardrail for Generative AI models, enabling the delivery of precise, fitting outputs in terms of both content and format.

The essence of AI Orchestrator

AI Orchestrator is an AI algorithm designed to enhance Generative AI implementations in business contexts. It discerns, orchestrates, and maintains conversation context across various modules, combining different technologies and approaches. This facilitates the generation of accurate and contextually relevant responses to user queries.

Addressing the Key Challenges of Generative AI

Large Language Models (LLMs) can generate coherent texts from natural language prompts. However, certain issues must be resolved before integrating this technology into business contexts.

Hallucinations

Responses may seem accurate but are often random, unreliable combinations of words, known as "hallucinations".

Misinformation

LLMs always generate an output, irrespective of whether the request needs information beyond the training dataset, resulting in potentially fabricated and false answers.

Source Quotation

LLMs cannot indicate which sources contributed to the formulation of a response.

Retrieval Augmented Generation (RAG) may be used to address these issues. This technique leverages a knowledge repository of company data, enabling generative AI to produce contextually informed responses. While RAG features play a pivotal role in enterprise adoption, they fall short in certain areas.

Certified Responses

RAG tools do not provide predefined, human-validated answers. They rely on the knowledge base to generate a brand new answer every time, even for simple and frequently asked questions.

External Sources Access

Access to external services and structured data through natural language is not supported by design in most RAG architectures.

Customized Dialogs

Conversations handled by RAG applications cannot be made to follow a predefined pattern.

Human Operator Integration

In RAG systems, bringing a human support operator into the conversation is neither seamless nor trivial.

How it works

The AI Orchestrator is a proprietary module that regulates the behavior of systems featuring Generative AI, enabling predetermined outcomes when properly configured.

Requests Pre-classification

The system pre-classifies user requests to identify the right agent to involve. It also processes the original utterance to improve retrieval, using techniques such as query expansion and metadata enrichment.
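The pre-classification step can be pictured with a minimal sketch. It assumes simple keyword matching as a stand-in for whatever classifier the product actually uses; the agent names, keyword sets, and synonym table are hypothetical and chosen only for illustration.

```python
# Hypothetical sketch: keyword routing plus synonym-based query expansion.
# Agent names, keywords, and synonyms are illustrative assumptions only.
AGENT_KEYWORDS = {
    "faq": {"opening", "hours", "return", "policy"},
    "nlq": {"total", "average", "count", "sales"},
    "api": {"order", "status", "track", "booking"},
}
SYNONYMS = {"order": ["purchase"], "status": ["state"]}
DEFAULT_AGENT = "rag"  # fallback when no keyword matches


def tokenize(utterance: str) -> list[str]:
    """Lowercase the utterance and strip basic punctuation from each word."""
    return [w.strip(".,?!").lower() for w in utterance.split()]


def classify(utterance: str) -> str:
    """Pick the agent whose keyword set overlaps most with the utterance."""
    tokens = set(tokenize(utterance))
    scores = {agent: len(tokens & kws) for agent, kws in AGENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else DEFAULT_AGENT


def expand_query(utterance: str) -> list[str]:
    """Append known synonyms so retrieval can match more documents."""
    tokens = tokenize(utterance)
    extra = [s for t in tokens for s in SYNONYMS.get(t, [])]
    return tokens + extra


def preprocess(utterance: str) -> dict:
    """Return the routing decision, the expanded query, and simple metadata."""
    query = expand_query(utterance)
    return {
        "agent": classify(utterance),
        "query": query,
        "metadata": {"token_count": len(query)},
    }
```

With these sample tables, `preprocess("What is the status of my order?")` routes the request to the hypothetical `api` agent and adds `purchase` and `state` to the query terms.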

Context Preservation

The system ensures continuity and coherence in interactions by keeping track of the conversation history. This allows the system to provide accurate and relevant responses by considering previous exchanges.
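As an illustration of context preservation, the sketch below keeps a bounded window of recent turns and prepends it to each new request. The `Conversation` class, its `max_turns` parameter, and the prompt layout are assumptions made for this example, not the product's actual design.

```python
from collections import deque


class Conversation:
    """Hypothetical history buffer that keeps only the most recent turns."""

    def __init__(self, max_turns: int = 3):
        # A deque with maxlen silently drops the oldest turn when full.
        self.turns = deque(maxlen=max_turns)

    def add_turn(self, user: str, assistant: str) -> None:
        """Record one completed exchange."""
        self.turns.append((user, assistant))

    def build_prompt(self, new_message: str) -> str:
        """Prefix the new message with the remembered exchanges."""
        lines = []
        for user, assistant in self.turns:
            lines.append(f"User: {user}")
            lines.append(f"Assistant: {assistant}")
        lines.append(f"User: {new_message}")
        return "\n".join(lines)
```

Bounding the window is one simple way to keep prompts within a model's context limit; summarizing older turns would be an alternative.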

Integrations

The AI Orchestrator layer allows for the integration of specialized modules addressing specific requests.

Multiple Sources

A proprietary RAG algorithm provides answers by leveraging knowledge from diverse custom text corpora.
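The general idea of retrieval across several corpora, with each passage tagged by its source, can be sketched as follows. This is not the proprietary algorithm: word overlap stands in for real relevance scoring, and the corpus names and passages are invented sample data.

```python
# Hypothetical sample corpora; names and passages are invented for illustration.
CORPORA = {
    "hr_manual": [
        "Employees accrue 20 vacation days per year.",
        "Remote work requires manager approval.",
    ],
    "it_handbook": [
        "Passwords must be changed every 90 days.",
        "VPN access requires manager approval.",
    ],
}


def tokens(text: str) -> set[str]:
    """Lowercased word set with basic punctuation stripped."""
    return {w.strip(".,?!").lower() for w in text.split()}


def retrieve(question: str, k: int = 2) -> list[tuple[str, str]]:
    """Return up to k (source, passage) pairs ranked by word overlap."""
    q = tokens(question)
    scored = []
    for source, passages in CORPORA.items():
        for passage in passages:
            overlap = len(q & tokens(passage))
            if overlap:
                scored.append((overlap, source, passage))
    # The sort is stable, so ties keep their original corpus order.
    scored.sort(key=lambda item: -item[0])
    return [(source, passage) for _, source, passage in scored[:k]]


def build_prompt(question: str, k: int = 2) -> str:
    """Assemble a grounded prompt in which every passage carries its source."""
    context = "\n".join(f"[{s}] {p}" for s, p in retrieve(question, k))
    return (
        "Answer using only the context below and cite sources in brackets.\n"
        f"{context}\nQuestion: {question}"
    )
```

Carrying the source label alongside each passage is what lets a generated answer quote where its information came from.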

Structured Data

A Natural Language Query (NLQ) model enables users to interact with databases and query structured data.

FAQs Repositories

A zero-shot learning model, powered by a proprietary vector search algorithm, selects the correct answer for specific questions that require a consistent response, leveraging custom FAQs repositories.
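A simplified view of FAQ matching by vector similarity is sketched below. It assumes bag-of-words vectors and cosine similarity in place of the proprietary vector search, and the FAQ entries and the `threshold` value are hypothetical.

```python
import math
from collections import Counter

# Hypothetical FAQ repository: question -> human-validated answer.
FAQS = {
    "What are your opening hours?": "We are open Monday to Friday, 9:00 to 18:00.",
    "How can I reset my password?": "Use the 'Forgot password' link on the login page.",
}


def embed(text: str) -> Counter:
    """Toy embedding: word counts (a real system would use dense vectors)."""
    return Counter(w.strip(".,?!'").lower() for w in text.split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def match_faq(question: str, threshold: float = 0.5):
    """Return the stored answer of the closest FAQ, or None if too dissimilar."""
    q = embed(question)
    best_faq, best_score = max(
        ((faq, cosine(q, embed(faq))) for faq in FAQS), key=lambda pair: pair[1]
    )
    return FAQS[best_faq] if best_score >= threshold else None
```

Because the answer is looked up rather than generated, the same question always yields the same validated text; returning `None` below the threshold leaves room for another module to take over.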

External Applications

The algorithm detects when additional data are needed from an external application. It selects the correct API to invoke and verifies that all essential information is provided.
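The two checks described here, choosing an API and confirming that required information is present, can be sketched as below. The registry entries, keyword matching, and parameter names are hypothetical; the actual detection logic is not described in this document.

```python
# Hypothetical API registry; names, keywords, and parameters are illustrative.
API_REGISTRY = {
    "track_order": {
        "keywords": {"track", "order", "delivery"},
        "required": ["order_id"],
    },
    "book_appointment": {
        "keywords": {"book", "appointment"},
        "required": ["date", "time"],
    },
}


def select_api(utterance: str):
    """Return the API whose keywords best match the utterance, or None."""
    words = {w.strip(".,?!").lower() for w in utterance.split()}
    scores = {
        name: len(words & spec["keywords"]) for name, spec in API_REGISTRY.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None


def missing_parameters(api_name: str, provided: dict) -> list[str]:
    """List required parameters that the user has not supplied yet."""
    return [p for p in API_REGISTRY[api_name]["required"] if p not in provided]


def plan_call(utterance: str, provided: dict) -> dict:
    """Decide whether to invoke an API, ask for more data, or do neither."""
    api = select_api(utterance)
    if api is None:
        return {"action": "no_api"}
    missing = missing_parameters(api, provided)
    if missing:
        return {"action": "ask_user", "api": api, "missing": missing}
    return {"action": "invoke", "api": api, "arguments": provided}
```

For example, `plan_call("Please book an appointment", {"date": "2025-01-10"})` returns an `ask_user` action listing `time` as the missing parameter, so the call is deferred until the information is complete.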

Dialog Flows

A user-friendly graphical tool simplifies the design of traditional dialog flows, leveraging Business Process Model and Notation (BPMN) and Decision Model and Notation (DMN) business rules.

Contact Center

A multichannel media bar supports integration with a customer contact center and forwards requests to the appropriate human operator when AI is unable to provide answers.

AIWave Approach

The AI Orchestrator layer is central to the AIWave Composite Generative AI system. It allows AI tasks to be performed with various models, individually or in combination, such as LLMs, SLMs, and other machine learning algorithms. It can be operated on-premises and configured for lightweight hardware or specific performance requirements.

The RAG and NLQ layers can generate answers using a fine-tuned SLM, a standard cloud-hosted LLM, or the most relevant text documents, sentences, or paragraphs extracted from a custom knowledge base by a proprietary summarization algorithm.

For more flexibility, customers can use their own cloud-based LLM subscriptions.